On the internet, there are algorithms all around you. In fact, you are reading this blog because an algorithm picked it out from many others and showed it to you, and you clicked.

(The algorithm has already taken note of it.)

When you open your Facebook account, an algorithm decides what you see on your timeline.

When you search for a photo in your phone gallery, an algorithm does the finding for you. It might even make a video for you.

When you buy something online, an algorithm sets the ideal deal price for you and it also watches your bank account transactions for fraud.

The stock market is full of algorithms trading with (other) algorithms.

Well, you might be interested in knowing how these little algorithmic bots that shape your world actually work. Especially when they don’t!

(Let’s call these algorithms bots, for the super-amazing work they do in seconds!)

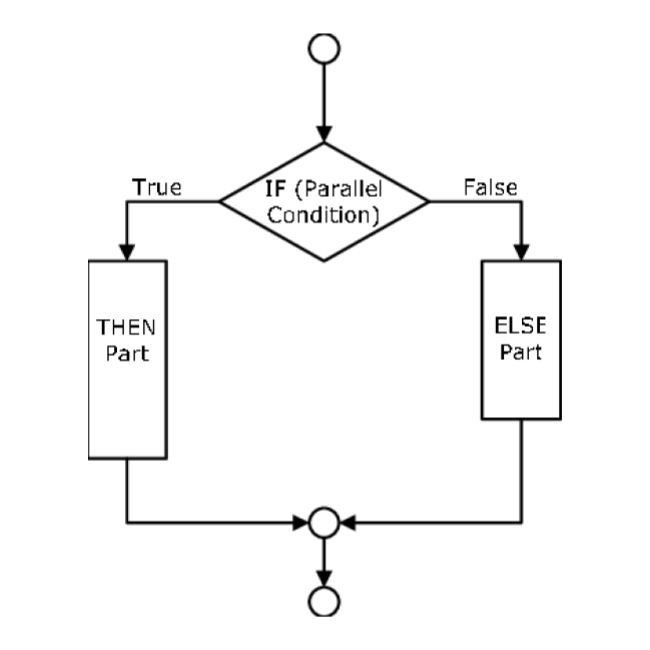

In the olden days, humans built algorithmic bots by giving them instructions that the humans could explain. For example:

If ButtonX is Clicked {

**********

} else {

**********

}

But many problems are too big and too hard for humans to write simple instructions for.

- There are a gazillion financial transactions occurring per second. Which ones amongst them are fraudulent?

- There are zillions of videos on YouTube. Which few should a user see as recommendations? Which should not be allowed on the site at all?

- What is the maximum price a specific user will pay for a specific airline seat?

Algorithmic bots do give answers to such questions. Not perfect answers, but much better ones than a human could manage!

More and more, no one knows exactly how these bots work. Not even the humans who built them!

The companies that build these bots don’t want to talk about how they work, because the bots are extremely valuable employees. And how their brains are built is a fiercely guarded trade secret.

Right now the cutting edge most likely involves a lot of linear algebra. But what the current hotness is on any particular site, and how its bots work, is a bit of an “I don’t know, and no one knows!” And it will probably always be so.

So, let’s talk about one of the more unusual but understandable ways bots can be built without anyone understanding how their brains work!

How can humans build AI-efficient algorithms (bots)?

Say you want a bot to identify what is in a picture: is it a dog or a cat? This task is very easy for humans, even for little baby humans, but it’s impossible for humans to explain to a bot, in bot-language, how to do it!

That is because humans simply know what a dog is and what a cat is! A human can describe the different characteristics of the two in words, but bots don’t understand words.

So to get a bot that can do this sorting, humans don’t build it themselves. Rather, they build:

- A builder bot, which builds several other bots, and

- A teacher bot, which teaches the newly built bots.

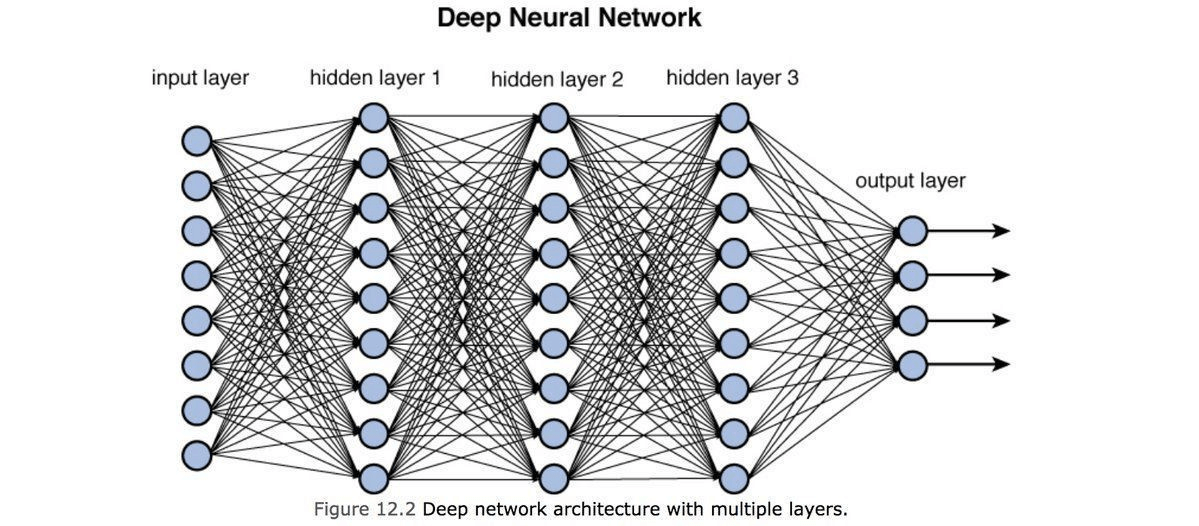

- The builder bot builds bots, though it is not good at it. Initially, it connects the wires and modules in each bot’s brain almost at random. This produces some very special (awkward) student bots, which are sent to the teacher bot to be taught.

- Of course, the teacher bot can’t tell a dog from a cat either. If humans could build a teacher bot that could, the problem would already be solved.

- Instead, the human gives the teacher bot a bunch of dog photos and cat photos, plus an answer key saying which is which. The teacher bot can’t teach, but it can test.

- This test is not a mere handful of cat and dog photos. It is millions of photos, each labelled as a cat or a dog in a clear answer key. Every time the test is run, each answer a student bot gives is checked against that key.

- The adorkable student bots try very hard to clear the test, but they fail miserably and it’s not really their fault. They were built like that.

- The bots return to the builder bot. Those that got bad grades are recycled, and the ones that did a little better are kept aside.

- The builder bot copies the survivors, makes some changes and new combinations, and sends them back to the teacher bot.

- Back to school, they go!

- The teacher bot again gives them the dog-cat test. It still teaches nothing; it only checks their answers against the key and hands out grades.

- After the test, the graded bots are sent back to the builder bot, sorted and re-wired, and the better bots are again sent to the teacher bot.

- Every round, the builder bot’s rewiring changes the connections in the bots’ brains. With each iteration, the best student bot gets a little better at identifying whether a given image is of a cat or a dog.

- The cycle is repeated n times. The teacher bot sends the student bots back to the builder bot with their results, and the builder bot recycles the poorly graded bots and modifies the better-scoring ones. The builder bot then sends the bots to the teacher bot again…

And again, and again, and again, and again…
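The build, test, recycle cycle above can be sketched in a few lines of Python. This is only a toy under loud assumptions: the "photos" are two made-up numbers, the hidden dog-or-cat rule is invented, and each bot's "brain" is just three weights. The names `make_test`, `build_random_bot`, `grade`, `rewire` and `build_test_repeat` are all hypothetical, and real systems are vastly bigger and usually trained differently.

```python
import random

rng = random.Random(0)  # fixed seed so the sketch is repeatable

def make_test(n):
    """Toy 'photos': two made-up features plus an answer key (invented data)."""
    test = []
    for _ in range(n):
        photo = (rng.uniform(-1, 1), rng.uniform(-1, 1))
        label = "dog" if photo[0] + 0.5 * photo[1] > 0 else "cat"
        test.append((photo, label))
    return test

def build_random_bot():
    """Builder bot, first pass: wire the brain (three weights) at random."""
    return [rng.uniform(-1, 1) for _ in range(3)]

def answer(bot, photo):
    """A student bot's guess for one photo."""
    w1, w2, bias = bot
    return "dog" if w1 * photo[0] + w2 * photo[1] + bias > 0 else "cat"

def grade(bot, test):
    """Teacher bot: cannot teach, only checks answers against the key."""
    correct = sum(answer(bot, photo) == label for photo, label in test)
    return correct / len(test)

def rewire(bot):
    """Builder bot, later passes: copy a survivor with small random changes."""
    return [w + rng.gauss(0, 0.1) for w in bot]

def build_test_repeat(test, population=50, keep=10, rounds=20):
    bots = [build_random_bot() for _ in range(population)]
    best_per_round = []
    for _ in range(rounds):
        bots.sort(key=lambda b: grade(b, test), reverse=True)
        best_per_round.append(grade(bots[0], test))
        survivors = bots[:keep]                     # keep the best
        children = [rewire(rng.choice(survivors))   # recycle the rest
                    for _ in range(population - keep)]
        bots = survivors + children
    best = max(bots, key=lambda b: grade(b, test))
    return best, best_per_round

exam = make_test(300)
best_bot, history = build_test_repeat(exam)
print(f"best grade in round 1: {history[0]:.2f}, final: {grade(best_bot, exam):.2f}")
```

Because the survivors are carried over unchanged, the top grade in this sketch can never drop from one round to the next. Nobody ever tells a bot what a dog looks like; the grades alone do the steering.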

Now, a builder that builds at random, a teacher that tests but doesn’t teach, and students that can’t learn and simply are what they are: in theory, this shouldn’t work. But in practice, it does!

Partly because in every iteration, the builder bot’s slaughterhouse keeps the best and discards the rest.

And partly because the teacher bot isn’t overseeing a tiny old school of a dozen or so students, but a vast warehouse with thousands of them.

And how many times is the test-build cycle repeated?

As many as necessary!

At first, a student bot emerges that can only barely tell dogs from cats. Say it scores just slightly better than the 50% that random guessing would get.

As this bot is copied and changed, the average test score slowly rises, and so the grade required to survive the next round rises higher and higher.

Eventually, from the vast student warehouse (slaughterhouse), a student bot will emerge that can tell a dog from a cat pretty well, even in a photo it has never seen before! (It scores more than 95%.)
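The words "a photo it has never seen before" carry a lot of weight here, and a small Python sketch may show why. Everything in it is an assumption for illustration: the "photos" are single numbers, even numbers stand in for dogs, and `MemorizerBot` and `RuleBot` are hypothetical stand-ins for two kinds of student. One bot just memorises the answer key, the other happens to be wired to the real pattern.

```python
def make_photos(start, count):
    """Toy labelled 'photos' (invented): one number each, even = dog, odd = cat."""
    return [((i,), "dog" if i % 2 == 0 else "cat")
            for i in range(start, start + count)]

class MemorizerBot:
    """A bot that simply memorises the answer key it was tested on."""
    def __init__(self, seen):
        self.key = dict(seen)

    def answer(self, photo):
        # For a photo it has never seen, it can only guess; here, always "cat".
        return self.key.get(photo, "cat")

class RuleBot:
    """A bot whose wiring happens to capture the real pattern."""
    def answer(self, photo):
        return "dog" if photo[0] % 2 == 0 else "cat"

def grade(bot, test):
    """Fraction of photos the bot labels correctly."""
    return sum(bot.answer(photo) == label for photo, label in test) / len(test)

seen = make_photos(0, 100)      # photos used during the build-test cycle
unseen = make_photos(100, 100)  # fresh photos held back until the end

memorizer = MemorizerBot(seen)
rule_bot = RuleBot()
for name, bot in [("memorizer", memorizer), ("rule bot", rule_bot)]:
    print(name, "seen:", grade(bot, seen), "unseen:", grade(bot, unseen))
```

Both bots ace the photos they were tested on, but only the rule bot keeps its grade on the fresh ones; the memorizer falls back to a coin-flip score. Holding some photos back is one common way to check which kind of bot the warehouse has actually produced.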

But how does the student bot do this? The teacher bot doesn’t know, the builder bot doesn’t know, and the human overseer can’t understand it either. Most importantly, not even the student bot itself can tell how it recognizes a dog from a cat.

After so many iterations, the wiring of the bot’s brain is incredibly complicated. An individual line of code may be understood, and the general purpose of clusters of code vaguely grasped, but the whole is beyond understanding!

Nonetheless, it works!

But this is frustrating, especially because the student bot is only very good at exactly the kind of questions it has been tested on. It’s great with photos, but videos, inverted photos, or things that are neither dogs nor cats leave it baffled.

Since the teacher bot can’t teach, all the human overseer can do is give it more questions, making the test longer and including the kinds of questions the best bots still get wrong.

This is important. It is actually why companies are obsessed with collecting data.

More data means longer tests, which means better bots.

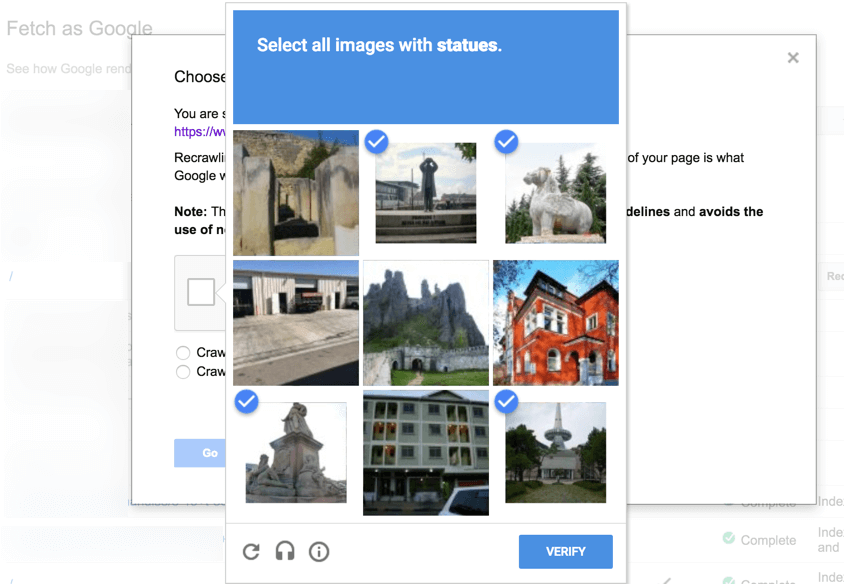

So when you get the “Are you human?” tests on Google, you are not only proving that you are not a bot. You are also helping humans build the tests that can teach a bot to tell lakes, mountains, traffic signals, horses, and humans apart.

Now can you guess what all those recent traffic questions might be useful for building? (AI-driven vehicles.)

There is another kind of test that builds itself: the tests on the humans!

For example, say a human overseer hypothetically wants users to keep watching the videos on their website for as long as possible.

Well, it is easy to measure how long a user stays online to watch a video.

Thus, a teacher bot gives each student bot a bunch of users to oversee, recording how long they watch videos and what kinds of videos they like.

The student bots do their best to show the users what they would like based on their previously watched videos.

The longer the users spend watching, the higher the bot’s score.
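Here is a rough Python sketch of that scoring idea, with the humans replaced by a made-up simulation. The three video categories, the watch times of 10 and 2 minutes, and the two example bots are all invented for illustration; the only point is that the teacher bot's grade is nothing more than total minutes watched.

```python
import random

rng = random.Random(1)  # fixed seed so the sketch is repeatable
CATEGORIES = ["cats", "cooking", "news"]

def simulated_watch_minutes(favorite, shown):
    """Invented user model: people watch longer when shown their favorite."""
    return 10.0 if shown == favorite else 2.0

def random_bot(history):
    """A freshly built bot: recommends a category at random."""
    return rng.choice(CATEGORIES)

def most_watched_bot(history):
    """A better bot: recommends whatever the user has watched most so far."""
    return max(CATEGORIES, key=history.count)

def score(bot, users):
    """Teacher bot: the grade is simply the total minutes watched."""
    return sum(simulated_watch_minutes(favorite, bot(history))
               for favorite, history in users)

# Each simulated user has a hidden favorite and a short viewing history
# in which that favorite appears at least three times out of five.
users = []
for _ in range(200):
    favorite = rng.choice(CATEGORIES)
    history = [favorite] * 3 + [rng.choice(CATEGORIES) for _ in range(2)]
    users.append((favorite, history))

print("random bot:      ", score(random_bot, users))
print("most-watched bot:", score(most_watched_bot, users))
```

The teacher bot never needs to know why a user likes cat videos. It only adds up the minutes, and the bot with more minutes survives.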

Build-Test-Repeat

A million cycles later, a student bot emerges that is pretty good at keeping the users watching. At least compared to what the humans could do.

But when people ask, “How does the Netflix algorithm select videos?”, there isn’t a great answer other than pointing to the bot, the user data it had access to and, most vitally, how the human overseer directs the teacher bot to score the tests. Because that is what the student bot is trying to be good at in order to survive.

But what the bot is thinking, or how it thinks, is not really knowable!

And our algorithmic buddies are everywhere, and they are not going anywhere.

All that is knowable is that the successful student bot gets to be the algorithm because it is 0.001% better than the previous bot at the test the human designed.

So everywhere on the internet, behind the screens, are tests to increase user interaction, to set prices just right to maximize revenue, to pick the posts from all your friends that you will like the most or the articles people will share the most, or whatever.

What’s testable is teachable, and a student bot will graduate from the warehouse to become the algorithm of its domain. At least for a little while, until a better bot overtakes it.
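That graduation rule can be written down as a tiny Python sketch. The bot names and their scores below are made up, and `graduate` is a hypothetical helper, not anyone's real system: the current algorithm keeps its job until some challenger beats it on the human-chosen test, even by a sliver.

```python
def graduate(champion, challengers, grade):
    """Return whichever bot scores highest on the human-chosen test."""
    best, best_score = champion, grade(champion)
    for bot in challengers:
        bot_score = grade(bot)
        if bot_score > best_score:  # strictly better, even by a sliver
            best, best_score = bot, bot_score
    return best, best_score

# Hypothetical bots are just names here; their test scores are invented.
scores = {"current": 0.95000, "new_a": 0.94000, "new_b": 0.95001}

winner, winning_score = graduate("current", ["new_a", "new_b"], scores.get)
print(winner, winning_score)
```

Notice that nothing in this rule asks how the winner works. The only question is whether its score is higher.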

Today we are increasingly in a position where all we can do is guide the bots through the tests we set, and we need to get comfortable with that.